Inflatable Whole-Body Robotic Skin with Internal ToF Depth Sensing

- Developed a single-piece inflatable robotic skin that provides a large geometric margin between the contact surface and the rigid frame, enabling contact detection before impact reaches the rigid structure.

- Designed custom ToF sensor modules with tilted orientation to achieve full-body coverage without blind spots using a minimal number of sensors.

- Trained a kinematics-based point-cloud predictor to estimate nominal skin geometry, enabling motion-agnostic contact localization by detecting deviations from the predicted surface.
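
The deviation-based localization in the last bullet can be illustrated with a minimal sketch. It assumes the predicted and measured point clouds are already in row-wise correspondence and that a fixed distance threshold separates contact from noise; the function name `localize_contact`, the threshold value, and the deviation-weighted centroid are illustrative assumptions, not the project's implementation.

```python
import numpy as np

def localize_contact(predicted, measured, threshold=0.01):
    """Flag points whose measured position deviates from the prediction.

    predicted, measured: (N, 3) arrays with matching rows (assumed).
    threshold: deviation in metres treated as contact (assumed value).
    Returns (mask, centroid); centroid is None when nothing is flagged.
    """
    predicted = np.asarray(predicted, dtype=float)
    measured = np.asarray(measured, dtype=float)
    deviation = np.linalg.norm(measured - predicted, axis=1)
    mask = deviation > threshold
    if not mask.any():
        return mask, None
    # Weight each flagged point by how far the skin moved there.
    weights = deviation[mask]
    centroid = (predicted[mask] * weights[:, None]).sum(axis=0) / weights.sum()
    return mask, centroid

# Toy example: a flat 5x5 patch with one point pushed inward by 2 cm.
xs, ys = np.meshgrid(np.linspace(0.0, 0.04, 5), np.linspace(0.0, 0.04, 5))
nominal = np.column_stack([xs.ravel(), ys.ravel(), np.zeros(25)])
observed = nominal.copy()
observed[12, 2] -= 0.02
mask, centroid = localize_contact(nominal, observed)
print(int(mask.sum()), centroid)
```

Because the comparison is made against the predicted surface rather than a fixed reference, the same check applies in any arm pose, provided the predictor supplies the nominal geometry for that pose.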

Tactile-proprioceptive sensor fusion for whole-body physical human-robot interaction

- Fused tactile cues with motor-current proprioception to estimate multi-axis contact forces on a skin-integrated robot arm.

- Proposed a contact-aware torque estimation method that disambiguates static friction from external forces using tactile onset detection, enabling sensitive force perception without explicit friction identification.

- Trained a temporal convolutional network (TCN) to compensate friction hysteresis during stick-slip transitions, reducing the estimation dead band by 53.9% and enabling smooth kinesthetic teaching.
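
One way to picture the contact-aware idea is a torque residual gated by a tactile contact flag. The sketch below is an assumption about how such gating could work, not the published method: the class name `ContactGatedTorqueEstimator`, the residual definition, and the rule of freezing the pre-contact residual as a friction bias are all hypothetical, and the TCN hysteresis compensation is not modeled.

```python
class ContactGatedTorqueEstimator:
    """Hypothetical helper: gates a joint-torque residual with a tactile flag."""

    def __init__(self):
        self.friction_bias = 0.0  # residual observed just before contact

    def update(self, residual, touched):
        """residual: torque from motor current minus model torque, in N*m.
        touched: True while the skin reports contact on this link."""
        if not touched:
            # No tactile contact: attribute the whole residual to friction
            # and model error, and report zero external torque.
            self.friction_bias = residual
            return 0.0
        # Contact: report the change relative to the pre-contact residual.
        return residual - self.friction_bias

estimator = ContactGatedTorqueEstimator()
residuals = [0.25, 0.25, 0.75, 1.0]
touched = [False, False, True, True]
external = [estimator.update(r, t) for r, t in zip(residuals, touched)]
print(external)
```

The point of the gate is that a residual seen without tactile contact never has to be explained by a friction model; it is simply treated as the baseline from which contact torque is measured.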

Magnet-based soft robotic skin using a 3D-printed multi-lattice structure and CNN-based tactile super-resolution

- Developed a magnet-embedded TPU multi-lattice structure fabricated via SLS 3D printing, enabling large-area tactile sensing with overlapping receptive fields and minimal blind spots.

- Designed a sparse 4x4 Hall-effect sensor array that measures 3-axis magnetic field variations in a non-contact configuration, physically isolating sensing electronics from external stimuli.

- Implemented a CNN-based tactile super-resolution model achieving 1.13 mm mean XY localization error and 0.55 mm depth error over a 140 mm x 140 mm sensing area.

EIT-Pneumatic Hybrid Robotic Skin for Practical and Accurate Force Map Reconstruction

- Proposed a hybrid tactile sensing framework that fuses electrical impedance tomography (EIT) with pneumatic sensing, using Tikhonov-regularized reconstruction and per-pad pneumatic calibration for accurate force map estimation.

- Fabricated the entire robotic skin using only FDM 3D printing (PLA/TPU) and spray coating (carbon/metallic ink), enabling a low-cost, accessible, and scalable design for whole-body integration.

- Reduced the non-uniformity of force-estimation sensitivity and demonstrated chest-mounted integration on a humanoid robot with reliable multi-contact sensing at 100 Hz.
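
For readers unfamiliar with Tikhonov-regularized reconstruction, the standard linearized form solves (JᵀJ + λI) x = Jᵀ Δv for the conductivity change x given a sensitivity matrix J and a voltage change Δv. The sketch below shows only that textbook step with NumPy; the function name, the identity regularizer, and the value of `lam` are assumptions, and the per-pad pneumatic calibration is not covered.

```python
import numpy as np

def tikhonov_reconstruct(J, dv, lam=1e-2):
    """Solve (J^T J + lam * I) x = J^T dv for x.

    J: (n_measurements, n_elements) sensitivity matrix (assumed given).
    dv: (n_measurements,) voltage change relative to a baseline frame.
    lam: regularization weight (illustrative value).
    """
    J = np.asarray(J, dtype=float)
    dv = np.asarray(dv, dtype=float)
    A = J.T @ J + lam * np.eye(J.shape[1])
    return np.linalg.solve(A, J.T @ dv)

# Toy example: with J = I the solution is dv scaled by 1 / (1 + lam).
J = np.eye(3)
dv = np.array([1.0, 2.0, 3.0])
print(tikhonov_reconstruct(J, dv, lam=1.0))
```

A larger `lam` suppresses noise at the cost of a smoother, lower-amplitude image, which is one reason a separate calibrated signal is useful for recovering force magnitude.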

Intuitive physical interaction based on whole-body tactile data in everyday environments

- A skin-integrated robot arm is deployed in everyday environments such as a kitchen.

- The whole-body tactile data is used for both tactile servoing and non-verbal commanding.

- All measurements and data exchange are performed using ROS2 and micro-ROS.

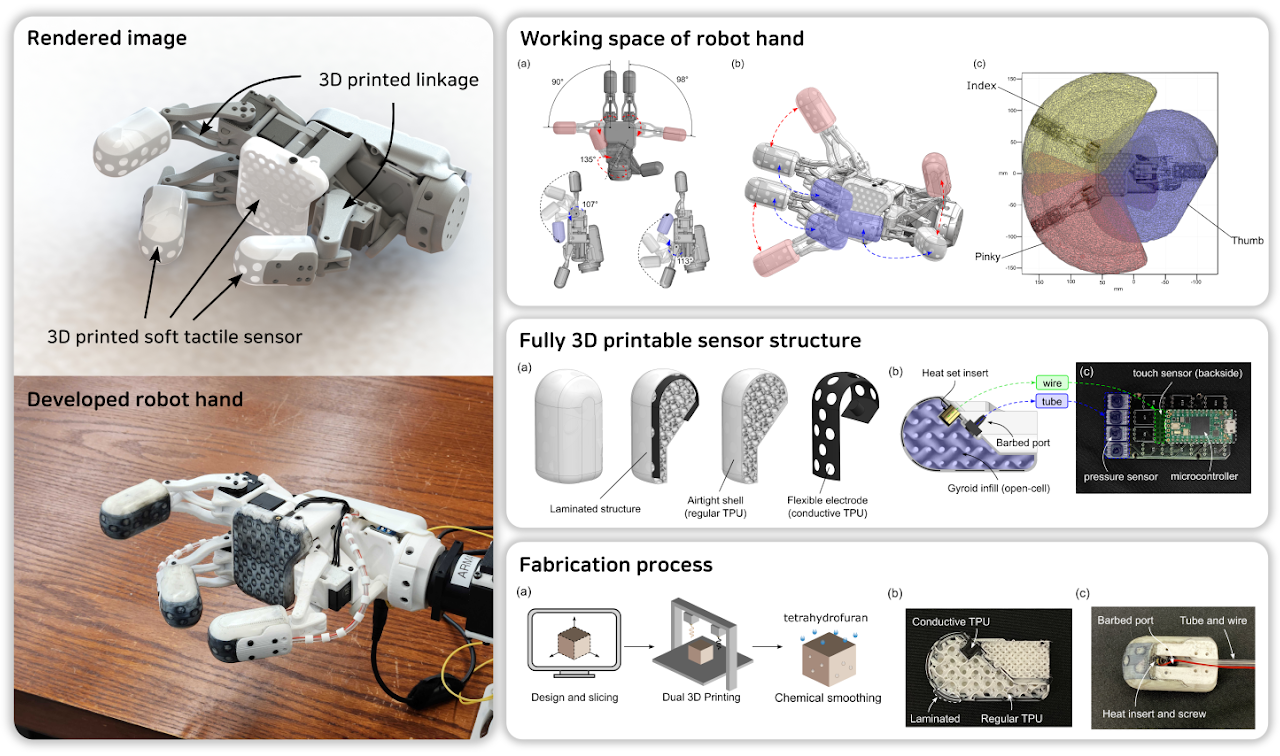

Fully 3D printable robot hand with soft tactile sensor

- A fully 3D-printable robot hand equipped with soft tactile sensors was developed.

- The developed robotic hand consists solely of 3D printed parts and commercial motors, with a total cost of less than $400.

- The tactile sensors combine pneumatic and capacitive sensing and can detect not only light contact forces but also the presence of nearby conductive objects.

MOMO: Mobile Object Manipulation Operator

- KIMLAB demonstrated the mobile object manipulation operator (MOMO) at IROS 2023 (Detroit).

- The demonstration showcased a modular mobile manipulator with pluggable mounts that accept 6-DOF arms and a sensor module in different configurations, extending the system's capabilities beyond those of a traditional serving robot.

- The system's primary objectives are autonomous removal of obstructions from the floor and delivery to human recipients, both without human intervention.

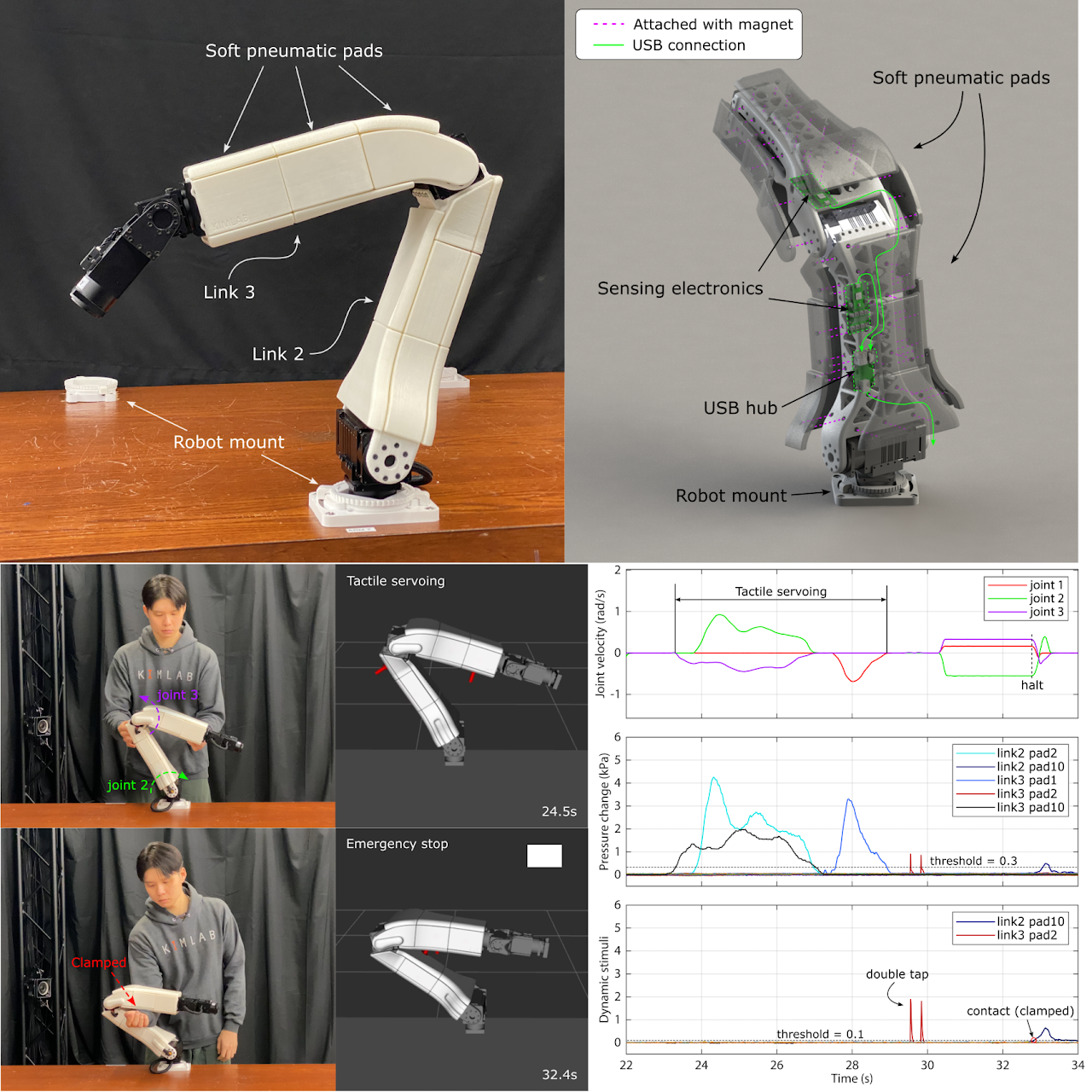

Low-cost and easy-to-build soft pneumatic robotic skin for safe and contact-rich pHRI

- A soft pneumatic robotic skin was developed for the plug-and-play robotic arm system (PAPRAS) by KIMLAB. The skin is integrated with the robot at both the hardware and software levels.

- The developed soft sensing pad is cheap and easy to produce as it is entirely 3D printable. Also, the sensing electronics are designed to be compatible with ROS, making the system highly accessible to everyone.

- The soft pad's internal pressure changes in response to tactile stimuli such as force and light touch. The measured signal is split into low- and high-frequency components, which are used for interaction or communication.

- The developed system was demonstrated to be capable of effectively handling situations such as joint entrapment and multiple contacts, thereby realizing safe and contact-rich pHRI.
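
The low/high frequency split of the pressure signal can be sketched with a first-order low-pass filter whose residual serves as the high-frequency part. This is a minimal illustration under assumed parameters: the function name `split_frequency_bands` and the smoothing factor `alpha` are hypothetical, and the actual filter design used in the system is not stated in the text.

```python
def split_frequency_bands(samples, alpha=0.1):
    """Split a pressure trace into slow and fast components.

    samples: sequence of pressure readings at a fixed rate.
    alpha: smoothing factor in (0, 1]; smaller means a lower cutoff (assumed).
    Returns (low, high) lists with low[i] + high[i] == samples[i].
    """
    low, high = [], []
    if len(samples) == 0:
        return low, high
    state = float(samples[0])
    for x in samples:
        # Exponential moving average tracks the slow (force-like) trend.
        state += alpha * (x - state)
        low.append(state)
        # What the average cannot follow is the fast (tap-like) part.
        high.append(x - state)
    return low, high

# Toy example: a steady press with a brief tap on top of it.
trace = [1.0, 1.0, 1.0, 1.5, 1.0, 1.0]
low, high = split_frequency_bands(trace)
print(low)
print(high)
```

In this picture a sustained push mostly shows up in `low`, while a light tap appears as a short spike in `high`, so the two channels can drive different behaviors.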

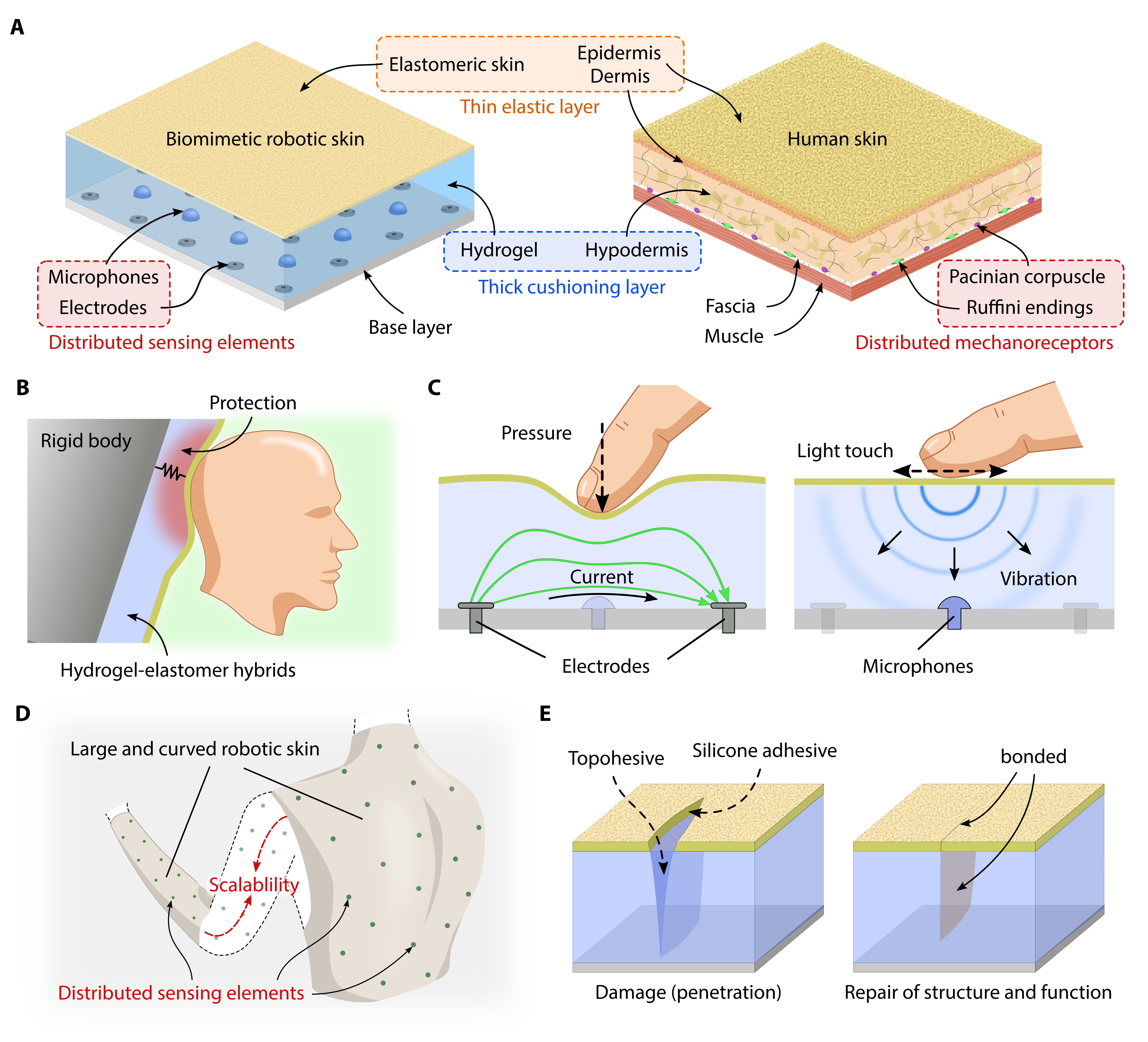

A biomimetic elastomeric robot skin using electrical impedance and acoustic tomography for tactile sensing

- A skin-inspired multi-layer structure is fabricated from silicone elastomer and ionic hydrogel, making it squishy yet durable.

- The ionic hydrogel layer is used as a medium for tactile sensing since it can transmit alternating electric currents and vibrations.

- Tomographic imaging methods are used to reconstruct a multi-modal tactile image from the measurement data.

- A convolutional neural network allows the robotic skin to classify the type of touch by extracting the spatio-temporal pattern of the tactile stimuli.

- The developed robotic skin can be repaired using chitosan topohesive and silicone adhesive, even after severe damage (incision).

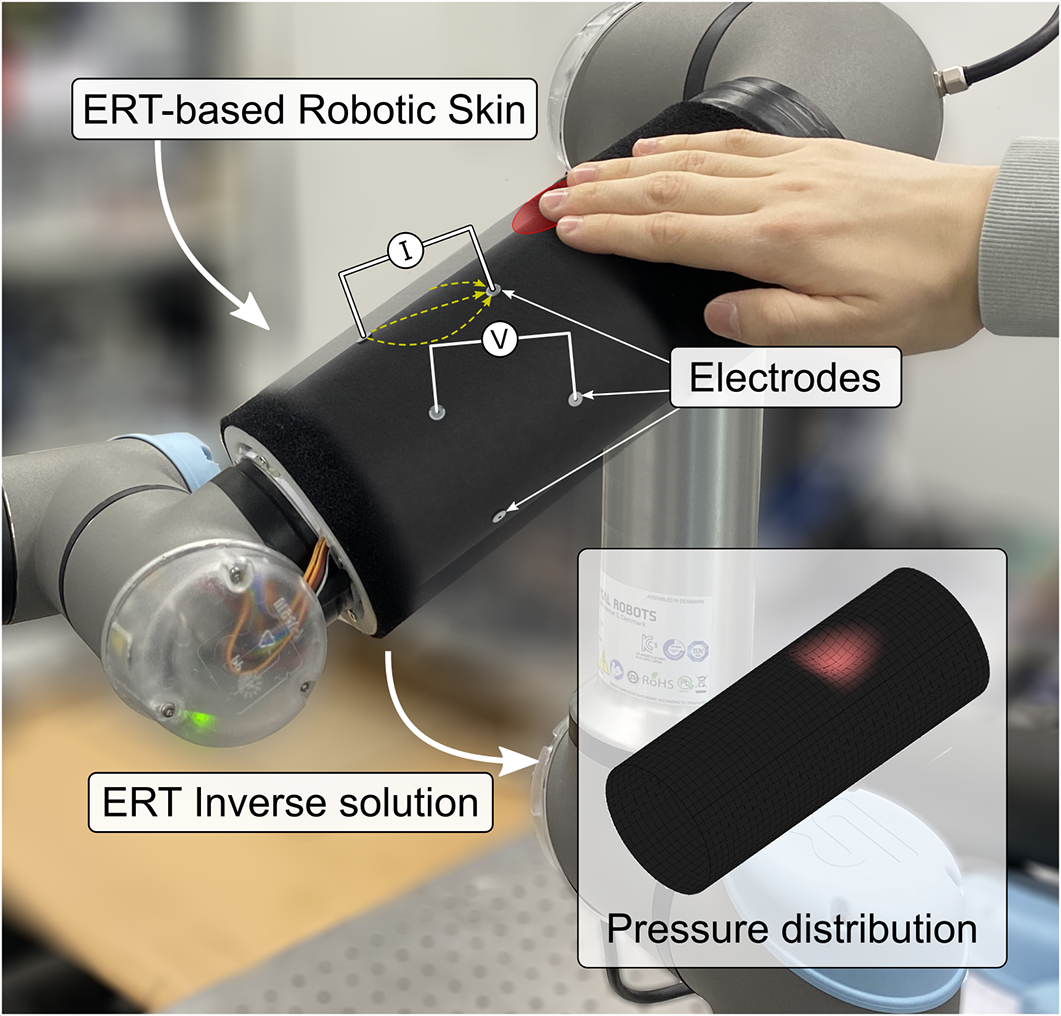

Neural-gas network-based optimal design method for ERT-based whole-body robotic skin

- We developed a method to obtain an optimal electrode arrangement for ERT-based robotic skin.

- The electrode arrangement is iteratively updated to maximize the minimum current density of the ERT-based robotic skin.

- Each electrode spreads out like a gas molecule inside the sensing domain, forming a grid-like arrangement adapted to the sensor's geometry.

- The number of electrodes can be freely adjusted to meet design requirements, owing to the automated design process.

- We implemented an ERT-based robotic skin with optimal design, and demonstrated physical human-robot interaction (pHRI).
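
The gas-like spreading of electrodes can be illustrated with the classic neural-gas update, in which every unit moves toward each sampled point by an amount that decays with its distance rank. The sketch below is plain neural gas on uniform samples from a unit square; it does not implement the minimum-current-density objective described above, and the function name, domain, and annealing schedules are assumptions.

```python
import numpy as np

def neural_gas_layout(n_units=16, n_samples=4000, seed=0):
    """Spread units over the unit square with rank-based neural-gas updates."""
    rng = np.random.default_rng(seed)
    # Start all units clustered near the centre of the domain.
    units = rng.uniform(0.45, 0.55, size=(n_units, 2))
    samples = rng.uniform(0.0, 1.0, size=(n_samples, 2))
    for t, x in enumerate(samples):
        frac = t / max(n_samples - 1, 1)
        eps = 0.5 * (0.01 / 0.5) ** frac   # step size, annealed 0.5 -> 0.01
        lam = 8.0 * (0.1 / 8.0) ** frac    # neighbourhood range, 8 -> 0.1
        dists = np.linalg.norm(units - x, axis=1)
        ranks = np.argsort(np.argsort(dists))  # 0 for the closest unit
        # Each unit takes a convex step toward the sample, so it stays
        # inside the (convex) domain.
        units += (eps * np.exp(-ranks / lam))[:, None] * (x - units)
    return units

layout = neural_gas_layout()
print(layout.round(2))
```

Changing `n_units` is all it takes to produce a layout with a different electrode count, which mirrors the adjustability noted in the list above; a non-rectangular sensor would be handled by sampling from its own geometry instead of the unit square.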

Adaptive and Optimal Measurement Algorithm for ERT-based Large-area Tactile Sensors

- The performance of ERT-based sensors is improved by increasing the number of electrodes, but the number of measurements and the computational cost also increase.

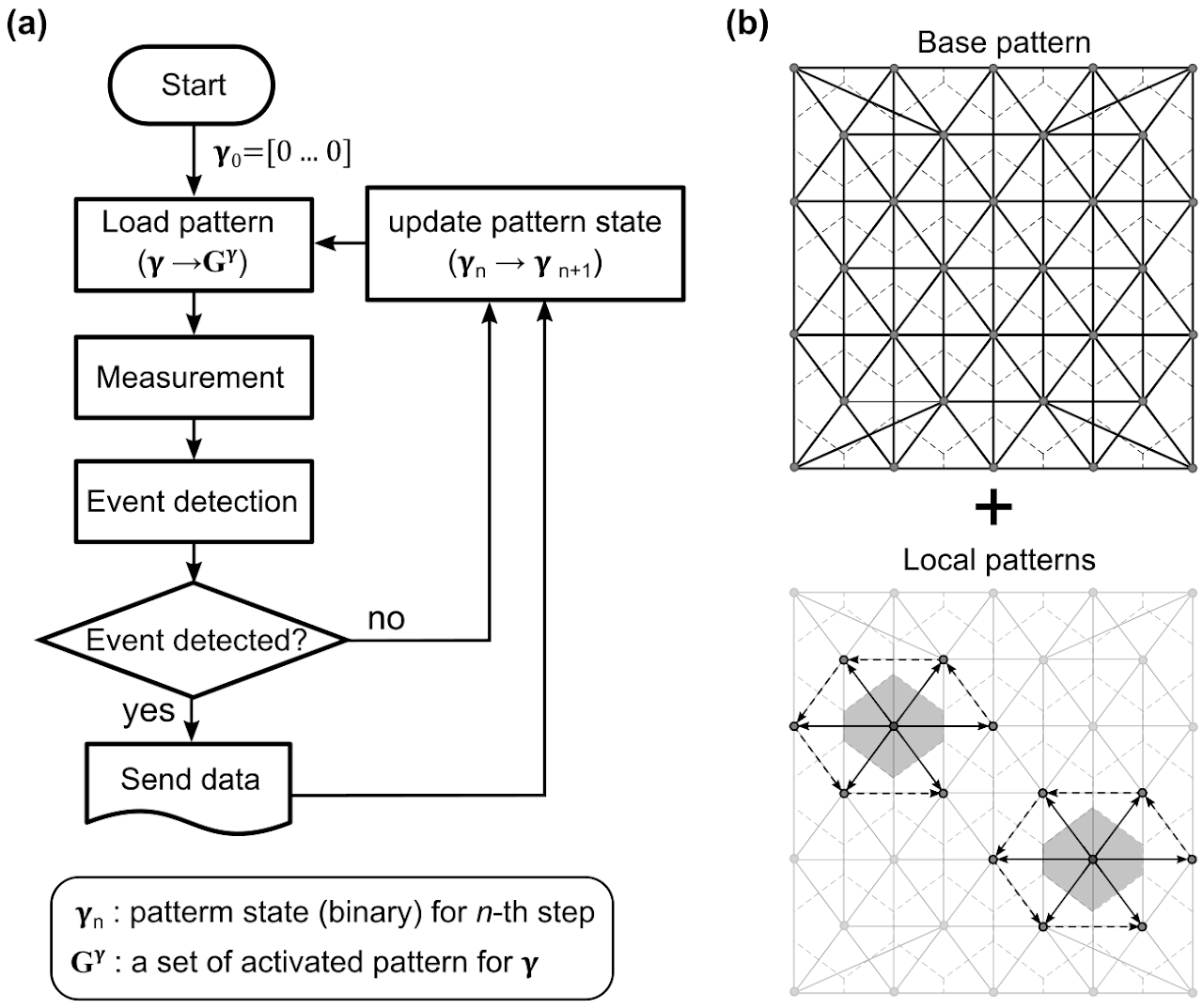

- We propose an adaptive and optimal measurement algorithm for ERT-based tactile sensors; the measurement pattern consists of a base pattern and local patterns.

- The tactile events are detected by a base pattern that maximizes the distinguishability of local conductivity changes.

- A set of local patterns is selectively recruited near the stimulated region to acquire more detailed information.

- The algorithm was implemented with a field-programmable gate array (FPGA).
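
The recruitment of local patterns near a detected stimulus can be sketched as a nearest-electrode selection. The rule used here (take the `k` electrodes closest to the estimated stimulus location and form all pairs among them) is an illustrative assumption, as are the function name and the grid layout; the base pattern and its distinguishability criterion are not modeled.

```python
from itertools import combinations

import numpy as np

def recruit_local_patterns(electrode_xy, stimulus_xy, k=4):
    """Return electrode index pairs to measure near a detected stimulus.

    electrode_xy: (N, 2) electrode positions.
    stimulus_xy: (2,) location estimated from the base pattern (assumed given).
    k: number of nearby electrodes to recruit (assumed value).
    """
    positions = np.asarray(electrode_xy, dtype=float)
    target = np.asarray(stimulus_xy, dtype=float)
    dists = np.linalg.norm(positions - target, axis=1)
    nearest = sorted(int(i) for i in np.argsort(dists, kind="stable")[:k])
    # Every pair among the recruited electrodes becomes one local pattern.
    return list(combinations(nearest, 2))

# Toy example: 4x4 electrode grid, stimulus near the corner at (0, 0).
grid = [(x, y) for y in range(4) for x in range(4)]
patterns = recruit_local_patterns(grid, (0.4, 0.4))
print(patterns)
```

With 16 electrodes there are 120 possible pairs, while this local set has 6, which is the kind of reduction in measurement count that motivates an adaptive scheme.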